Stages

Introduction

A stage is a physical unit of execution. It is a step in a physical execution plan.

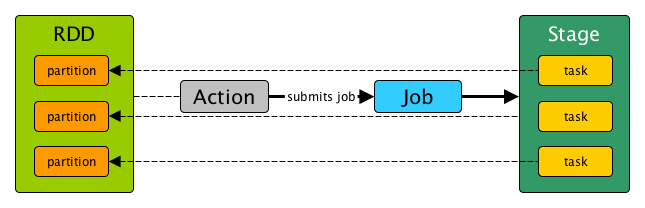

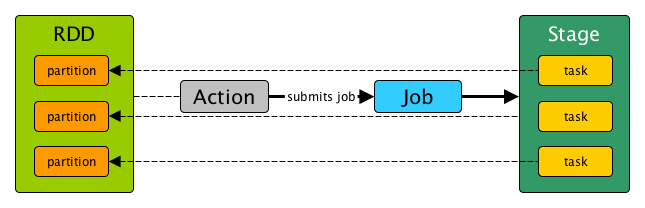

A stage is a set of parallel tasks, one per partition of an RDD, that compute partial results of a function executed as part of a Spark job.

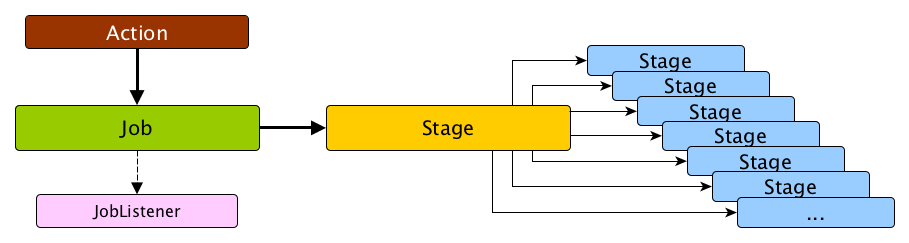

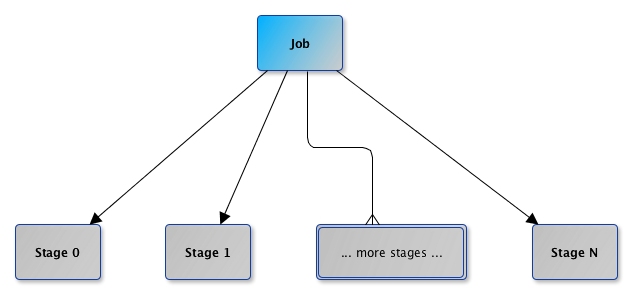

In other words, a Spark job is a computation that is sliced into stages.

A stage is uniquely identified by id. When a stage is created, DAGScheduler increments the internal counter nextStageId to track the number of stage submissions.

A stage can only work on the partitions of a single RDD (identified by rdd), but can be associated with many other dependent parent stages (via internal field parents), with the boundary of a stage marked by shuffle dependencies.

Submitting a stage can therefore trigger execution of a series of dependent parent stages (refer to RDDs, Job Execution, Stages, and Partitions).
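The parent-first ordering described above can be illustrated with a small toy model. This is only a sketch and not Spark's code: the `Stage` class and the `submission_order` helper are hypothetical names introduced for illustration, and the real DAGScheduler also tracks waiting, running, and failed stages.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    """Toy stage: an id plus the parent stages it depends on."""
    id: int
    parents: list = field(default_factory=list)


def submission_order(stage, done=None, order=None):
    """Return stage ids in the order they have to run: parents before children."""
    done = set() if done is None else done
    order = [] if order is None else order
    for parent in stage.parents:
        if parent.id not in done:
            submission_order(parent, done, order)
    if stage.id not in done:
        done.add(stage.id)
        order.append(stage.id)
    return order


# Stage 3 depends on stage 2, which depends on stages 0 and 1.
s0 = Stage(0)
s1 = Stage(1)
s2 = Stage(2, [s0, s1])
s3 = Stage(3, [s2])

order = submission_order(s3)
print(order)  # [0, 1, 2, 3]
```

Asking for stage 3 alone pulls in the whole chain of ancestors, which is the effect the paragraph describes.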

Finally, every stage has a firstJobId that is the id of the job that submitted the stage.

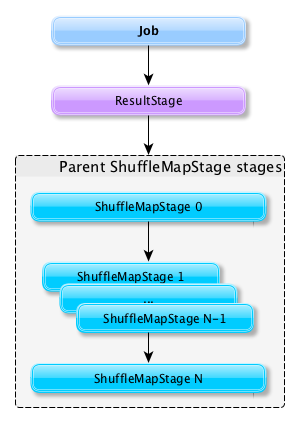

There are two types of stages:

- ShuffleMapStage is an intermediate stage (in the execution DAG) that produces data for other stage(s). It writes map output files for a shuffle. It can also be the final stage in a job in adaptive query planning.

- ResultStage is the final stage that executes a Spark action in a user program by running a function on an RDD.

When a job is submitted, a new stage is created with its parent ShuffleMapStages linked. The parent stages are either created from scratch or, if other jobs already use them, linked to and shared.

A stage knows about the jobs it belongs to (using the internal field jobIds).
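A minimal sketch of how stage ids, firstJobId, and jobIds could fit together when stages are shared between jobs. Everything here is a toy model under stated assumptions: `ToyScheduler`, its registry keyed by a shuffle id, and the dictionary layout are hypothetical and are not Spark's API.

```python
import itertools


class ToyScheduler:
    """Toy model of stage creation and sharing (not Spark's implementation)."""

    def __init__(self):
        # Plays the role of the nextStageId counter mentioned in the text.
        self._next_stage_id = itertools.count()
        # Hypothetical registry: shuffle id -> already created stage.
        self._stage_by_shuffle = {}

    def get_or_create_shuffle_map_stage(self, shuffle_id, job_id):
        stage = self._stage_by_shuffle.get(shuffle_id)
        if stage is None:
            # Created from scratch: the submitting job becomes first_job_id.
            stage = {
                "id": next(self._next_stage_id),
                "first_job_id": job_id,
                "job_ids": set(),
            }
            self._stage_by_shuffle[shuffle_id] = stage
        # Created or shared, the stage records every job it belongs to.
        stage["job_ids"].add(job_id)
        return stage


sched = ToyScheduler()
a = sched.get_or_create_shuffle_map_stage(shuffle_id=0, job_id=0)
b = sched.get_or_create_shuffle_map_stage(shuffle_id=0, job_id=1)  # shared
c = sched.get_or_create_shuffle_map_stage(shuffle_id=1, job_id=1)  # new

print(a is b, a["job_ids"], c["id"])
```

In this model the second job reuses the stage created by the first one, so the stage ends up belonging to both jobs while keeping the first job's id.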

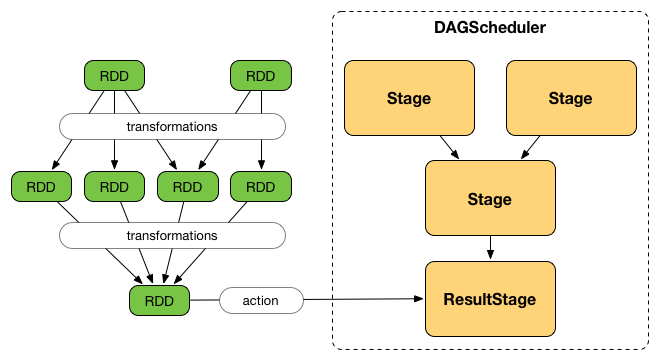

DAGScheduler splits up a job into a collection of stages. Each stage contains a sequence of narrow transformations that can be completed without shuffling the entire data set, separated at shuffle boundaries, i.e. where shuffle occurs. Stages are thus a result of breaking the RDD graph at shuffle boundaries.

Shuffle boundaries introduce a barrier where stages/tasks must wait for the previous stage to finish before they fetch map outputs.

RDD operations with narrow dependencies, like map() and filter(), are pipelined together into one set of tasks in each stage, but operations with shuffle dependencies require multiple stages, i.e. one to write a set of map output files, and another to read those files after a barrier.
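To make the idea of breaking the RDD graph at shuffle boundaries concrete, here is a simplified sketch. It assumes a linear lineage in which each operation is tagged with how it depends on its predecessor; the `split_into_stages` helper and the tags are illustrative only. A real lineage is a graph, and the map side of a shuffle runs in the upstream stage.

```python
def split_into_stages(ops):
    """Group a linear lineage into stages, starting a new stage at every shuffle.

    ops is a list of (name, dependency) pairs in lineage order, where
    dependency is 'narrow' or 'shuffle' (how the op depends on its predecessor).
    """
    stages = [[]]
    for name, dependency in ops:
        if dependency == "shuffle" and stages[-1]:
            stages.append([])  # shuffle boundary: close the current stage
        stages[-1].append(name)
    return stages


lineage = [
    ("textFile", "narrow"),
    ("flatMap", "narrow"),
    ("map", "narrow"),
    ("reduceByKey", "shuffle"),
    ("filter", "narrow"),
]

stages = split_into_stages(lineage)
print(stages)
# [['textFile', 'flatMap', 'map'], ['reduceByKey', 'filter']]
```

The narrow operations stay together in one stage, and the single shuffle dependency produces exactly two stages.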

In the end, every stage will have only shuffle dependencies on other stages, and may compute multiple operations inside it. The actual pipelining of these operations happens in the RDD.compute() functions of various RDDs, e.g. MappedRDD, FilteredRDD, etc.
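Pipelining can be pictured as chained iterators: each operation wraps the iterator of its parent, so one record flows through all operations before the next record is read. The sketch below uses Python generators as a stand-in for the compute() functions. The function names and the `trace` list are made up for illustration.

```python
trace = []  # records the order in which work actually happens


def source():
    """Stand-in for reading one partition."""
    for x in [1, 2, 3]:
        trace.append(f"read {x}")
        yield x


def map_compute(parent_iter, f):
    """Stand-in for a map-like compute(): lazily wraps the parent iterator."""
    return (f(x) for x in parent_iter)


def filter_compute(parent_iter, pred):
    """Stand-in for a filter-like compute(): lazily wraps the parent iterator."""
    return (x for x in parent_iter if pred(x))


def double(x):
    trace.append(f"map {x}")
    return x * 2


def keep_large(x):
    trace.append(f"filter {x}")
    return x > 2


result = list(filter_compute(map_compute(source(), double), keep_large))
print(result)     # [4, 6]
print(trace[:3])  # ['read 1', 'map 1', 'filter 2']
```

The trace shows that no intermediate collection is materialized between the map and the filter, which is why both fit into a single task.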

At some point in a stage’s life, every partition of the stage gets transformed into a task: a ShuffleMapTask for a ShuffleMapStage or a ResultTask for a ResultStage.

Partitions are computed in jobs, and result stages may not always need to compute all partitions in their target RDD, e.g. for actions like first() and lookup().
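The mapping from partitions to tasks can be sketched as follows. This is a toy model: the task and stage names come from the text, while `tasks_for` and the tuple representation are hypothetical.

```python
# Which task type each stage type produces (names taken from the text).
TASK_KIND = {"ShuffleMapStage": "ShuffleMapTask", "ResultStage": "ResultTask"}


def tasks_for(stage_kind, partitions_to_compute):
    """Create one (task kind, partition id) pair per partition to compute."""
    kind = TASK_KIND[stage_kind]
    return [(kind, p) for p in partitions_to_compute]


# A shuffle map stage over a 4-partition RDD needs all of its partitions.
map_tasks = tasks_for("ShuffleMapStage", range(4))

# A result stage for an action such as first() may only need partition 0.
result_tasks = tasks_for("ResultStage", [0])

print(len(map_tasks), result_tasks)
```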

DAGScheduler prints the following INFO message when there are tasks to submit:

INFO DAGScheduler: Submitting 1 missing tasks from ResultStage 36 (ShuffledRDD[86] at reduceByKey at <console>:24)

There is also the following DEBUG message with pending partitions:

DEBUG DAGScheduler: New pending partitions: Set(0)

Tasks are later submitted to Task Scheduler (via taskScheduler.submitTasks).

When no tasks in a stage can be submitted, the following DEBUG message shows in the logs:

FIXME

numTasks - where and what

Caution: FIXME Why do stages have numTasks? Where is this used? How does this correspond to the number of partitions in an RDD?

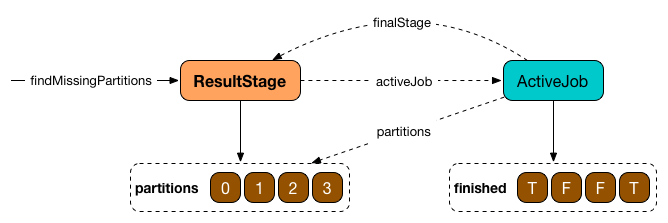

Stage.findMissingPartitions

Stage.findMissingPartitions() calculates the ids of the missing partitions, i.e. the partitions that the ActiveJob knows are not finished yet.

A ResultStage finds them by asking the active job which partition ids (out of numPartitions) are not finished (using the ActiveJob.finished array of booleans).

In the above figure, partitions 1 and 2 are not finished (F is false while T is true).
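The lookup for a ResultStage can be sketched in a few lines. This is a toy version, assuming the finished flags are available as a plain list of booleans; the four-element array is an assumed example chosen so that partitions 1 and 2 are unfinished, as in the figure.

```python
def find_missing_partitions(finished):
    """Return the ids of partitions whose 'finished' flag is still False."""
    return [partition for partition, done in enumerate(finished) if not done]


# Assumed example: T, F, F, T
finished = [True, False, False, True]

missing = find_missing_partitions(finished)
print(missing)  # [1, 2]
```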

Stage.failedOnFetchAndShouldAbort

Stage.failedOnFetchAndShouldAbort(stageAttemptId: Int): Boolean checks whether the number of fetch-failed attempts (tracked in fetchFailedAttemptIds) exceeds the number of consecutive failures allowed for a given stage (which should then be aborted).

Note: The number of consecutive failures for a stage is not configurable.
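The check can be pictured with the following sketch. It is a toy model under loud assumptions: the `ToyStage` class, the use of a set for the failed attempt ids, and the limit of 3 allowed failures are all hypothetical values chosen for illustration, not values taken from Spark.

```python
class ToyStage:
    """Toy model of the fetch-failure bookkeeping (not Spark's implementation)."""

    # Hypothetical fixed limit; the text only says that it is not configurable.
    ALLOWED_FETCH_FAILURES = 3

    def __init__(self):
        # Assumption: a set, so reporting the same attempt twice counts once.
        self.fetch_failed_attempt_ids = set()

    def failed_on_fetch_and_should_abort(self, stage_attempt_id):
        """Record the failed attempt and say whether the limit is exceeded."""
        self.fetch_failed_attempt_ids.add(stage_attempt_id)
        return len(self.fetch_failed_attempt_ids) > self.ALLOWED_FETCH_FAILURES


stage = ToyStage()
decisions = [stage.failed_on_fetch_and_should_abort(a) for a in [0, 1, 1, 2, 3]]
print(decisions)  # [False, False, False, False, True]
```

In this model the repeated report for attempt 1 does not move the stage closer to being aborted; only distinct failed attempts count.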