import org.apache.spark.{SparkConf, SparkContext}

// 1. Create Spark configuration

val conf = new SparkConf()

.setAppName("SparkMe Application")

.setMaster("local[*]") // local mode

// 2. Create Spark context

val sc = new SparkContext(conf)Inside Creating SparkContext

This document describes what happens when you create a new SparkContext.

|

Note

|

The example uses Spark in local mode, but the initialization with the other cluster modes would follow similar steps. |

Creating SparkContext instance starts by setting the internal allowMultipleContexts field with the value of spark.driver.allowMultipleContexts and marking this SparkContext instance as partially constructed. It makes sure that no other thread is creating a SparkContext instance in this JVM. It does so by synchronizing on SPARK_CONTEXT_CONSTRUCTOR_LOCK and using the internal atomic reference activeContext (that eventually has a fully-created SparkContext instance).

|

Note

|

The entire code of |

startTime is set to the current time in milliseconds.

stopped internal flag is set to false.

The very first information printed out is the version of Spark as an INFO message:

INFO SparkContext: Running Spark version 2.0.0-SNAPSHOT|

Tip

|

You can use version method to learn about the current Spark version or org.apache.spark.SPARK_VERSION value.

|

A LiveListenerBus instance is created (as listenerBus).

The current user name is computed.

|

Caution

|

FIXME Where is sparkUser used?

|

It saves the input SparkConf (as _conf).

|

Caution

|

FIXME Review _conf.validateSettings()

|

It ensures that the first mandatory setting - spark.master is defined. SparkException is thrown if not.

A master URL must be set in your configurationIt ensures that the other mandatory setting - spark.app.name is defined. SparkException is thrown if not.

An application name must be set in your configurationFor Spark on YARN in cluster deploy mode, it checks existence of spark.yarn.app.id. SparkException is thrown if it does not exist.

Detected yarn cluster mode, but isn't running on a cluster. Deployment to YARN is not supported directly by SparkContext. Please use spark-submit.|

Caution

|

FIXME How to "trigger" the exception? What are the steps? |

When spark.logConf is enabled SparkConf.toDebugString is called.

|

Note

|

SparkConf.toDebugString is called very early in the initialization process and other settings configured afterwards are not included. Use sc.getConf.toDebugString once SparkContext is initialized.

|

The driver’s host and port are set if missing. spark.driver.host becomes the value of Utils.localHostName (or an exception is thrown) while spark.driver.port is set to 0.

|

Note

|

spark.driver.host and spark.driver.port are expected to be set on the driver. It is later asserted by SparkEnv.createDriverEnv. |

spark.executor.id setting is set to driver.

|

Tip

|

Use sc.getConf.get("spark.executor.id") to know where the code is executed — driver or executors.

|

It sets the jars and files based on spark.jars and spark.files, respectively. These are files that are required for proper task execution on executors.

If event logging is enabled, i.e. spark.eventLog.enabled flag is true, the internal field _eventLogDir is set to the value of spark.eventLog.dir setting or the default value /tmp/spark-events. Also, if spark.eventLog.compress is enabled (it is not by default), the short name of the CompressionCodec is assigned to _eventLogCodec. The config key is spark.io.compression.codec (default: lz4).

|

Tip

|

Read about compression codecs in Compression. |

It sets spark.externalBlockStore.folderName to the value of externalBlockStoreFolderName.

|

Caution

|

FIXME: What’s externalBlockStoreFolderName?

|

A JobProgressListener is created and registered to LiveListenerBus.

MetadataCleaner is created.

|

Caution

|

FIXME What’s MetadataCleaner? |

Optional ConsoleProgressBar with SparkStatusTracker are created.

SparkUI creates a web UI (as _ui) if the property spark.ui.enabled is enabled (i.e. true).

|

Caution

|

FIXME Where’s _ui used?

|

A Hadoop configuration is created. See Hadoop Configuration.

If there are jars given through the SparkContext constructor, they are added using addJar. Same for files using addFile.

At this point in time, the amount of memory to allocate to each executor (as _executorMemory) is calculated. It is the value of spark.executor.memory setting, or SPARK_EXECUTOR_MEMORY environment variable (or currently-deprecated SPARK_MEM), or defaults to 1024.

_executorMemory is later available as sc.executorMemory and used for LOCAL_CLUSTER_REGEX, Spark Standalone’s SparkDeploySchedulerBackend, to set executorEnvs("SPARK_EXECUTOR_MEMORY"), MesosSchedulerBackend, CoarseMesosSchedulerBackend.

The value of SPARK_PREPEND_CLASSES environment variable is included in executorEnvs.

|

Caution

|

|

The Mesos scheduler backend’s configuration is included in executorEnvs, i.e. SPARK_EXECUTOR_MEMORY, _conf.getExecutorEnv, and SPARK_USER.

HeartbeatReceiver RPC endpoint is registered (as _heartbeatReceiver).

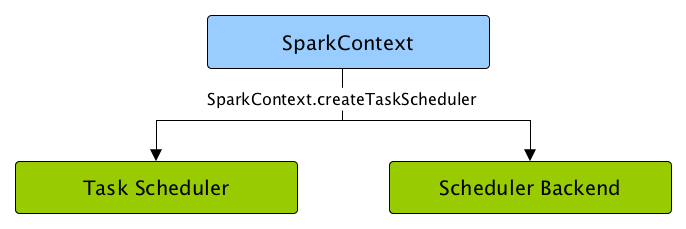

SparkContext.createTaskScheduler is executed (using the master URL) and the result becomes the internal _schedulerBackend and _taskScheduler.

|

Note

|

The internal _schedulerBackend and _taskScheduler are used by schedulerBackend and taskScheduler methods, respectively.

|

DAGScheduler is created (as _dagScheduler).

SparkContext sends a blocking TaskSchedulerIsSet message to HeartbeatReceiver RPC endpoint (to inform that the TaskScheduler is now available).

The internal fields, _applicationId and _applicationAttemptId, are set (using applicationId and applicationAttemptId from the TaskScheduler Contract).

The setting spark.app.id is set to the current application id and Web UI gets notified about it if used (using setAppId(_applicationId)).

The BlockManager (for the driver) is initialized (with _applicationId).

|

Caution

|

FIXME Why should UI know about the application id? |

MetricsSystem is started (after the application id is set using spark.app.id).

|

Caution

|

FIXME Why does Metric System need the application id? |

The driver’s metrics (servlet handler) are attached to the web ui after the metrics system is started.

_eventLogger is created and started if isEventLogEnabled. It uses EventLoggingListener that gets registered to LiveListenerBus.

|

Caution

|

FIXME Why is _eventLogger required to be the internal field of SparkContext? Where is this used?

|

If dynamic allocation is enabled, ExecutorAllocationManager is created (as _executorAllocationManager) and immediately started.

|

Note

|

_executorAllocationManager is exposed (as a method) to YARN scheduler backends to reset their state to the initial state.

|

_cleaner is set to ContextCleaner if spark.cleaner.referenceTracking is enabled (i.e. true). By default it is enabled.

|

Caution

|

FIXME It’d be quite useful to have all the properties with their default values in sc.getConf.toDebugString, so when a configuration is not included but does change Spark runtime configuration, it should be added to _conf.

|

postEnvironmentUpdate is called that posts SparkListenerEnvironmentUpdate message on LiveListenerBus with information about Task Scheduler’s scheduling mode, added jar and file paths, and other environmental details. They are displayed in web UI’s Environment tab.

SparkListenerApplicationStart message is posted to LiveListenerBus (using the internal postApplicationStart method).

|

Note

|

TaskScheduler.postStartHook does nothing by default, but the only implementation TaskSchedulerImpl comes with its own postStartHook and blocks the current thread until a SchedulerBackend is ready.

|

MetricsSystem is requested to register the following sources:

ShutdownHookManager.addShutdownHook() is called to do SparkContext’s cleanup.

|

Caution

|

FIXME What exactly does ShutdownHookManager.addShutdownHook() do?

|

Any non-fatal Exception leads to termination of the Spark context instance.

|

Caution

|

FIXME What does NonFatal represent in Scala?

|

nextShuffleId and nextRddId start with 0.

|

Caution

|

FIXME Where are nextShuffleId and nextRddId used?

|

A new instance of Spark context is created and ready for operation.

Creating SchedulerBackend and TaskScheduler (createTaskScheduler method)

createTaskScheduler(

sc: SparkContext,

master: String,

deployMode: String): (SchedulerBackend, TaskScheduler)The private createTaskScheduler is executed as part of creating an instance of SparkContext to create TaskScheduler and SchedulerBackend objects.

It uses the master URL to select right implementations.

createTaskScheduler understands the following master URLs:

-

local- local mode with 1 thread only -

local[n]orlocal[*]- local mode withnthreads. -

local[n, m]orlocal[*, m]— local mode withnthreads andmnumber of failures. -

spark://hostname:portfor Spark Standalone. -

local-cluster[n, m, z]— local cluster withnworkers,mcores per worker, andzmemory per worker. -

mesos://hostname:portfor Spark on Apache Mesos. -

any other URL is passed to

getClusterManagerto load an external cluster manager.

|

Caution

|

FIXME |

Loading External Cluster Manager for URL (getClusterManager method)

getClusterManager(url: String): Option[ExternalClusterManager]getClusterManager loads ExternalClusterManager that can handle the input url.

If there are two or more external cluster managers that could handle url, a SparkException is thrown:

Multiple Cluster Managers ([serviceLoaders]) registered for the url [url].|

Note

|

getClusterManager uses Java’s ServiceLoader.load method.

|

|

Note

|

getClusterManager is used to find a cluster manager for a master URL when creating a SchedulerBackend and a TaskScheduler for the driver.

|

setupAndStartListenerBus

setupAndStartListenerBus(): UnitsetupAndStartListenerBus is an internal method that reads spark.extraListeners setting from the current SparkConf to create and register SparkListenerInterface listeners.

It expects that the class name represents a SparkListenerInterface listener with one of the following constructors (in this order):

-

a single-argument constructor that accepts SparkConf

-

a zero-argument constructor

setupAndStartListenerBus registers every listener class.

You should see the following INFO message in the logs:

INFO Registered listener [className]It starts LiveListenerBus and records it in the internal _listenerBusStarted.

When no single-SparkConf or zero-argument constructor could be found for a class name in spark.extraListeners setting, a SparkException is thrown with the message:

[className] did not have a zero-argument constructor or a single-argument constructor that accepts SparkConf. Note: if the class is defined inside of another Scala class, then its constructors may accept an implicit parameter that references the enclosing class; in this case, you must define the listener as a top-level class in order to prevent this extra parameter from breaking Spark's ability to find a valid constructor.Any exception while registering a SparkListenerInterface listener stops the SparkContext and a SparkException is thrown and the source exception’s message.

Exception when registering SparkListener|

Tip

|

Set |

Creating SparkEnv for Driver (createSparkEnv method)

createSparkEnv(

conf: SparkConf,

isLocal: Boolean,

listenerBus: LiveListenerBus): SparkEnvcreateSparkEnv simply delegates the call to SparkEnv to create a SparkEnv for the driver.

It calculates the number of cores to 1 for local master URL, the number of processors available for JVM for * or the exact number in the master URL, or 0 for the cluster master URLs.

Utils.getCurrentUserName

getCurrentUserName(): StringgetCurrentUserName computes the user name who has started the SparkContext instance.

|

Note

|

It is later available as SparkContext.sparkUser. |

Internally, it reads SPARK_USER environment variable and, if not set, reverts to Hadoop Security API’s UserGroupInformation.getCurrentUser().getShortUserName().

|

Note

|

It is another place where Spark relies on Hadoop API for its operation. |

Utils.localHostName

localHostName computes the local host name.

It starts by checking SPARK_LOCAL_HOSTNAME environment variable for the value. If it is not defined, it uses SPARK_LOCAL_IP to find the name (using InetAddress.getByName). If it is not defined either, it calls InetAddress.getLocalHost for the name.

|

Note

|

Utils.localHostName is executed while SparkContext is being created.

|

|

Caution

|

FIXME Review the rest. |

stopped flag

|

Caution

|

FIXME Where is this used? |